Employees in most organisations are already using AI tools. Generative assistants, chatbots built on large language models, and writing and document summarisation tools have entered everyday work so quickly that data protection procedures and policies have not kept pace.

The result? In many organisations, employees paste client data, contract contents, national ID numbers or medical records into public AI tools — unaware that they may be violating GDPR and disclosing trade secrets in the process.

In this article we explain:

- why sharing personal data with external AI tools carries GDPR risk,

- how AI tool providers treat data entered by users,

- what obligations a data controller has,

- and how to implement a practical AI policy in your organisation.

Why generative AI and GDPR concern every organisation

Generative AI tools operate in the cloud. Every query — every prompt — is sent to the provider’s servers. In the case of public versions of these tools, data entered by the user may be used for further model training or processed by an entity based outside the European Economic Area.

GDPR applies whenever a prompt contains information relating to an identified or identifiable natural person. Examples include a client’s name, email address, phone number, national identification number, health data, employee performance review results, or contract details that name the parties. The list is longer than most users instinctively assume.

The European Data Protection Board (EDPB) has established a dedicated taskforce on generative AI tools to coordinate enforcement actions by data protection authorities across the EU. In 2025, the EDPB expanded the taskforce's scope to cover AI enforcement more broadly. European supervisory authorities are aligned: using external AI tools to process personal data without an appropriate legal basis and a data processing agreement is a GDPR violation.

Who is the controller, who is the processor?

This is one of the key questions every organisation using external AI tools must address.

When an employee uses a public AI tool for work and pastes personal data into it, the organisation is the data controller for that processing and is responsible for the fact that the data reached an external system. The AI tool provider may be treated as a data processor in this scenario, which means a data processing agreement (DPA) should be in place between the organisation and the provider. Without one, the processing does not comply with Article 28 GDPR.

Some AI tool providers offer organisations a DPA as part of their business or enterprise terms of service. Consumer-facing free accounts typically do not include such an agreement. This is a significant distinction that every organisation should verify before allowing employees to use a given tool for work purposes involving personal data.

Regardless of the tool, the data controller must know what data is being processed, for what purpose, and on what legal basis.

What must never be shared with external AI tools

The following categories of data should never be entered into public AI tools without first verifying the provider’s privacy policy and putting an appropriate agreement in place:

Identifying personal data — names, national identification numbers, passport or ID numbers, home addresses of clients or employees.

Contact data — email addresses or phone numbers linked to an identifiable individual.

Special category data — health information, medical test results, biometric data, trade union membership, religious or political beliefs.

Contract and HR data — contract contents that include the parties’ personal data, HR proceedings records, recruitment data.

Financial data — account numbers, transaction histories linked to an individual.

A practical rule of thumb: if the prompt still makes sense and is useful after removing personal data — remove it before submitting. If the tool needs the personal data to complete the task, stop and assess whether an appropriate legal basis and provider agreement are in place.
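The rule of thumb above can be partly automated. The following is a minimal Python sketch, assuming a hypothetical `redact` helper that runs before a prompt leaves the organisation. The two patterns are illustrative assumptions that cover only email addresses and phone-like digit sequences. They do not catch names, national ID formats or health details, so a check like this supplements employee judgement rather than replacing it.

```python
import re

# Illustrative patterns only (assumptions, not a complete PII detector).
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# A digit, then at least seven digits, spaces or hyphens, then a digit,
# with an optional leading "+".
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def redact(prompt: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    prompt = PHONE.sub("[PHONE]", prompt)
    return prompt


example = (
    "Contact Anna at anna.kowalska@example.com "
    "or +48 600 123 456 about the invoice."
)
print(redact(example))
# Contact Anna at [EMAIL] or [PHONE] about the invoice.
```

Note that the first name survives redaction in this example. Pattern matching handles structured identifiers reasonably well, but free-text personal data still has to be removed by the person writing the prompt.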

Obligations of the organisation as data controller

Allowing employees to use external AI tools with no rules in place is a violation of the accountability principle under Article 5(2) GDPR. A data controller is required to actively manage risk, not merely respond after the fact.

In practice, this means several concrete steps:

Updating the record of processing activities. If AI tools are used in the organisation to process personal data, those activities should be recorded with the applicable legal basis, purpose, and recipients — including entities outside the EEA.

Verifying agreements with providers. For every AI tool used in connection with personal data, confirm whether a data processing agreement exists. If the provider does not offer one, using the tool for personal data processing does not comply with GDPR.

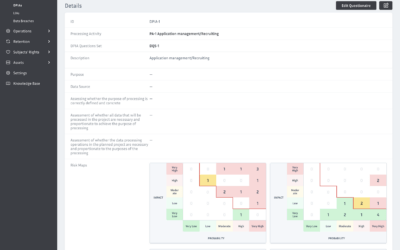

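Risk assessment. Before deploying an AI tool in any process involving personal data, carry out a risk assessment. Where the processing is likely to result in a high risk to individuals' rights and freedoms, carry out a full Data Protection Impact Assessment (DPIA) under Article 35 GDPR.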

Data transfers outside the EEA. If the AI tool provider processes data outside the European Economic Area, the transfer requires a valid transfer mechanism: an adequacy decision covering the destination, or appropriate safeguards such as Standard Contractual Clauses (SCCs).
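The steps in this section can be tracked as structured data. The sketch below is one possible shape, not a prescribed format: the `AIToolProcessing` class, its field names and the `compliance_gaps` function are all hypothetical, and the tool and vendor names are placeholders. It records one AI-related entry for the record of processing activities and lists which of the checks described above are still open.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIToolProcessing:
    """One hypothetical entry in the record of processing activities."""
    tool: str
    purpose: str
    legal_basis: str                 # e.g. "Art. 6(1)(f) legitimate interests"
    recipients: List[str]
    dpa_signed: bool
    outside_eea: bool
    transfer_safeguard: Optional[str] = None   # e.g. "SCCs"
    risk_assessed: bool = False


def compliance_gaps(entry: AIToolProcessing) -> List[str]:
    """Return the open items for an entry, in a fixed order."""
    gaps = []
    if not entry.legal_basis:
        gaps.append("no legal basis recorded")
    if not entry.dpa_signed:
        gaps.append("no data processing agreement (Art. 28 GDPR)")
    if entry.outside_eea and not entry.transfer_safeguard:
        gaps.append("transfer outside the EEA without a recorded safeguard")
    if not entry.risk_assessed:
        gaps.append("no risk assessment recorded")
    return gaps


entry = AIToolProcessing(
    tool="ExampleChat",                       # placeholder tool name
    purpose="drafting replies to customer enquiries",
    legal_basis="Art. 6(1)(f) legitimate interests",
    recipients=["Example Vendor Inc."],       # placeholder vendor
    dpa_signed=False,
    outside_eea=True,
)
for gap in compliance_gaps(entry):
    print(gap)
```

An empty result does not prove compliance. It only shows that the items named in this section have been recorded for that tool.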

AI policy in the organisation — where to start

The absence of a policy for using external AI tools is the most common organisational failure in this area. Employees use these tools because they are convenient and there are no guidelines — not because they intend to violate GDPR.

A sound AI policy should answer several basic questions:

- Which AI tools are approved for work use?

- Which categories of data must never be entered into any external AI tool?

- Which tools have a DPA in place with the organisation and may be used with personal data?

- Who approves the introduction of a new AI tool into a business process?

- How will employees be trained on this topic?

The policy does not need to be a lengthy document. What matters is that it is clear, accessible to employees, and regularly updated — because the AI tool landscape changes faster than most organisational procedures.
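Part of such a policy can also be expressed in machine-readable form, for example to drive an internal tool catalogue. The following Python sketch is an assumption about how that might look: the `ApprovedTool` class, the `POLICY` table, the `may_use` function and both tool names are hypothetical. It encodes two of the questions above: which tools are approved, and which of them have a DPA and may therefore be used with personal data.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    dpa_in_place: bool   # True if a DPA with the provider has been verified


# Hypothetical allowlist; tools not listed here are not approved.
POLICY: Dict[str, ApprovedTool] = {
    "ExampleChat Enterprise": ApprovedTool("ExampleChat Enterprise", True),
    "ExampleSummariser Free": ApprovedTool("ExampleSummariser Free", False),
}


def may_use(tool_name: str, contains_personal_data: bool,
            policy: Dict[str, ApprovedTool] = POLICY) -> bool:
    """Apply the policy: unapproved tools are refused outright, and
    personal data is allowed only where a DPA is in place."""
    tool = policy.get(tool_name)
    if tool is None:
        return False
    if contains_personal_data:
        return tool.dpa_in_place
    return True


print(may_use("ExampleChat Enterprise", contains_personal_data=True))
print(may_use("ExampleSummariser Free", contains_personal_data=True))
print(may_use("UnknownTool", contains_personal_data=False))
```

Defaulting to refusal for unlisted tools mirrors the approval question in the list above: a new tool only becomes usable once someone has reviewed it and added it to the policy.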

AI Act and GDPR — what is changing

The EU Artificial Intelligence Act entered into force on 1 August 2024. From 2 August 2025, the provisions covering general-purpose AI models, the technology that underlies most popular generative AI tools, began to apply.

The AI Act and GDPR operate in parallel and complement each other. GDPR governs what happens to personal data — regardless of whether it is processed by an AI system or not. The AI Act places additional obligations on AI system providers: transparency, documentation, risk management. For organisations using off-the-shelf AI tools, the obligations arising from GDPR remain the primary concern — and the AI Act reinforces the case for taking them seriously.

Summary

Using AI tools in the workplace is not prohibited, but it requires awareness and appropriate procedures. GDPR places on the data controller the obligation to ensure that personal data is processed lawfully, on an appropriate legal basis, and with proper safeguards in place.

Key principles to keep in mind:

- personal data should not be entered into external AI tools without verifying a DPA with the provider,

- the organisation as controller is responsible for what employees submit to AI tools in a work context,

- AI tools used to process personal data should be reflected in the record of processing activities,

- transfers of data to providers outside the EEA require appropriate safeguards,

- an AI usage policy should form part of the organisation’s GDPR documentation.

If your organisation does not yet have procedures in place in this area, a good starting point is a review of which AI tools are actually being used and whether appropriate agreements with providers exist.

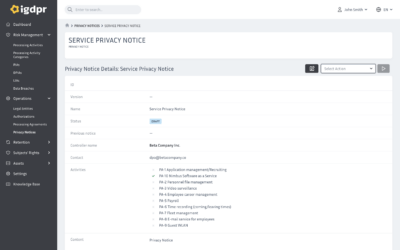

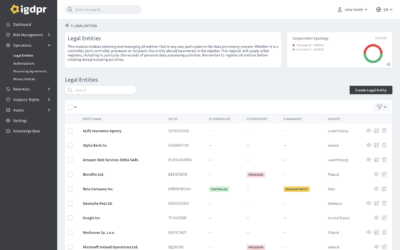

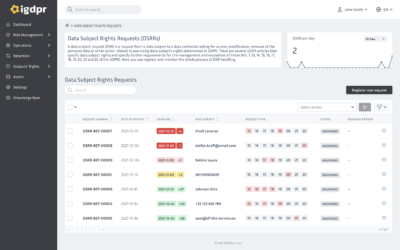

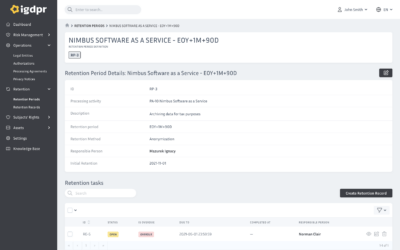
